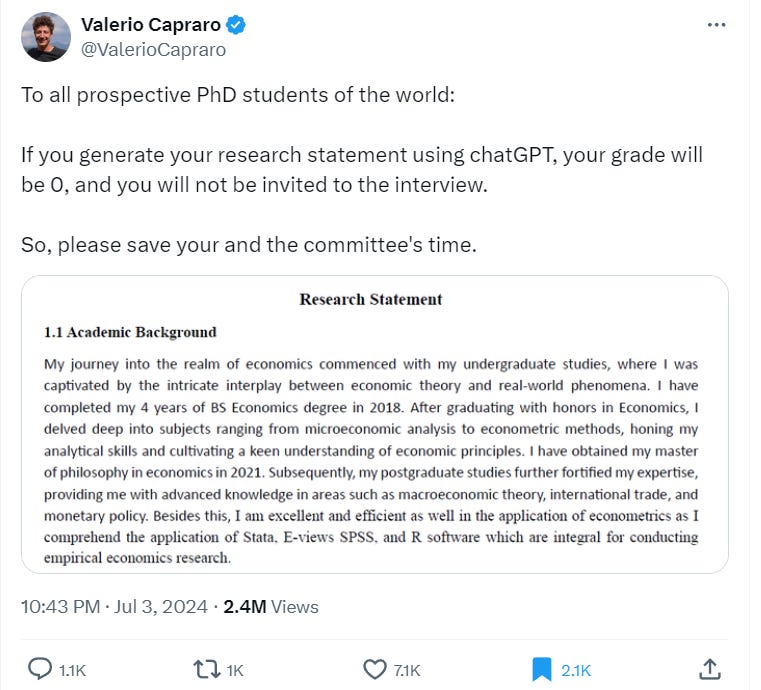

Let's delve into exploring the rich and dynamic tapestry of AI plagiarism, or: You're not an AI detector

The academic who posted this is, I am sure, a good-faith actor who thinks he is sending a valuable signal to would-be AI plagiarists. Unfortunately, he has inadvertently done this student unjustified harm twice over: by rejecting the extract as AI plagiarism when it most probably is not, and by publishing it on Twitter alongside an accusation, where the student might conceivably see it and feel humiliated.

Despite the tweeter’s assertions, this fragment is not obviously written by ChatGPT. On the contrary, we can, with some confidence, rule out ChatGPT as the author. How? The best clue is that it contains multiple grammatical errors and is clumsy in a way ChatGPT isn’t. Of course, ChatGPT might have assisted in some way or written some sentences of this passage (it’s almost always impossible to rule this out), but I see no particular evidence of that. It is also possible that some or all of the rest of the statement was written by ChatGPT, but the author’s implicit claim that this passage is clearly ChatGPT-generated is not true. To me, it reads as the work of an ESL author doing their best in a second language.

For comparison, I had ChatGPT (GPT-4o) rewrite the passage:

> My academic journey in economics began during my undergraduate years, where I was fascinated by the dynamic relationship between economic theories and real-world applications. I earned my BS in Economics in 2018, graduating with honors. My studies included a thorough exploration of microeconomic analysis and econometric methods, which sharpened my analytical skills and deepened my understanding of economic principles. I continued my education by pursuing a master's degree in economics, which I completed in 2021. My postgraduate studies further enhanced my expertise in macroeconomic theory, international trade, and monetary policy. I have a strong proficiency in econometrics and am well-versed in the use of statistical software such as Stata, E-views, SPSS, and R, which are essential tools for empirical economic research.

If ChatGPT had written it from scratch, I suspect it would have been even less flowery.

Many academics would like the telltale sign of ChatGPT to be bad writing in every sense of the term. This would be convenient for them, because:

- It would make ChatGPT easy to spot.
- It would lower the stakes: ChatGPT material would only ever be the obviously bad submissions, which they would have given a low grade anyway.
- It would widen the gap between what academics and academically trained people can do and what LLMs can do, easing the terror of replacement.

In truth, a lot of the markers that discriminate between good and bad writing at an undergraduate level (grammar, fluency, etc.) were among the first things language models cracked, and remain their strongest areas.

What about the use of “ChatGPT words” like delve, interplay and fortify? Don’t these show that the extract was likely generated by AI? Here we have to ‘delve’ into where ChatGPT words come from. My understanding is that ChatGPT was created in part using feedback from English-speaking Nigerians (reinforcement learning from human feedback, or RLHF), and “delve” and many other “ChatGPT words” are used frequently in Nigerian English. If so, these words came to ChatGPT from human writers, and their presence proves nothing about whether a machine was involved.

Fluent speakers of English as a second language, rightly proud of the vocabulary they have mastered, can tend to use more ‘big’ words than native speakers do. Many have been falsely dinged as AI plagiarists for this reason. (Big words are not always bad writing, despite Orwell’s moralism.)

The tweet’s author later defended the decision, appealing to word choice and font:

> Critique: I cannot reject a candidate because I believe their application is AI-generated, as it’s impossible to know for certain whether a text is AI-generated. It’s true that no algorithm can tell for sure if a text is AI-generated. However, we are discussing a specific piece of text, not a general one. The text contained dozens red flags, including words typically used by ChatGPT (intricate, delve…) and a suspicious change of font in the last sentence. Additionally, the statement continued in this manner for three pages, displaying red flag after red flag. The strongest indicator was the shape of the apostrophes: standard Calibri font has round apostrophes, GPT-generated text has straight ones.

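I’ve already dealt with word choice. As for the apostrophes and other aspects of the font, this is weak circumstantial evidence at best. Straight and curly apostrophes are different characters, not features of Calibri, and Word only swaps in curly ones as you type, so text pasted in from elsewhere keeps its straight ones. There are plenty of text editors the student might have copied text into and out of on its way to a Word document, any of which could produce this result.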

I have heard some people suggest that if a student’s writing style sounds a bit AI-y, it’s up to the student to notice this and change it. This is so ridiculous that I can barely be bothered with it, but in brief: (1) many students aren’t chronically online enough to have heard the various claims and counterclaims about what AI sounds like; (2) it’s an extreme ask of anyone to change their entire writing style or be dinged as a plagiarist; (3) since many of those fingered as possible AI plagiarists are ESL speakers, it’s a discriminatory ask under a disparate-impact test. Many features of AI writing are bad, and it’s fine to encourage students to avoid them in their own writing for quality reasons, but there should be no implication that they are plagiarists if they do not.

Administrations need to step up and educate educators on the dangers of thinking they can detect AI writing. Some people, I’m sure, can reliably detect AI writing, but most faculty are not among them, and, critically, most people who think they can reliably detect AI writing are not among them either. Acting on these misconceptions creates the risk of a discrimination lawsuit and, more importantly, is morally wrong. Enough students have been wrongly failed, harassed, and defamed. False accusations of cheating, even when they are successfully fought off, cause grave stress and harm that will never be compensated.

AI detectors, such as the one sold by Turnitin, are snake oil, and they discriminate against ESL students. There is currently no reliable way to detect AI writing by machine, and even if a strategy is developed, it will soon be circumvented.

Nor do I have any respect for academics whose solution is to talk in vague terms about innovative new assessment techniques that circumvent AI, without spelling out what they are. One of my least favorite kinds of academic talk is rabbiting on about your innovative new techniques and methodologies without (a) putting anything on the table produced by your methodological innovations or (b) even spelling out your ‘innovations’ in detail. I find this approach particularly contemptible in pedagogy. Some lecturers have found innovative assessment strategies that work for their courses and sidestep AI, at least for the moment, and that’s great! But the concept of “using innovation” is not, itself, an innovation!

As of now, I am aware of four main defensible options for dealing with AI plagiarism in college courses where the primary output is writing:

1. Use in-class assignments.
2. Require the student to provide version history with their submission.
3. Claim that you have ways and means of detecting ChatGPT, put the fear of God into the students, and then take no action because you can’t actually tell.
4. Set the standard so high that AI can’t pass it, or at least can’t get a good pass (appropriate for some grad and upper-level undergraduate courses, etc.).

Eventually, AI will be able to fake (2). Option (3) won’t work forever. And AI will keep getting better and better, which rules out (4) in the long run too. Unless something drastic emerges, all assessments will eventually have to be in-class exams or quizzes. This is terrible, and I won’t pretend otherwise: students will never learn to structure a proper essay, and the world will be greatly impoverished by this consequence of AI, not least because writing is one of the best ways to think something through. However, pretending you have a magic nose for AI that you most likely don’t have won’t fix the situation.

Justice, always and everywhere, no matter the level, means accepting that some (perhaps most) wrongdoers who think before they act will get the better of the system and prove impossible to discover and convict. The root of so much injustice in sanctions and punishment lies here: in overestimating our own ability to sniff out wrongdoing, an overestimation born in turn of the fear of someone ‘getting the better of us’. But the bad guys often do get the better of us, and there’s nothing more to be said.

More generally, people have always overestimated their capacity for peering into the human heart. Witness the proliferation of ‘empaths’ online. The sense that you can just tell whether something was written by AI, that you can see whether there is a soul behind a block of text, is an extension of the same foolishness: a kind of inverted anthropomorphism.

I've seen some college professors institute a system in which the take-home essay is followed by an oral examination without notes, a kind of debriefing with questions about the reasoning behind this or that part of the essay. It seems like a decent system: even if the student used AI, it ensures they understand what the AI spat out at more than a surface level.

I'm really hoping ChatGPT wrote this blog post