The curse of Bear Council is upon the Australian National University

Beatrice Tucker was expelled from the Australian National University for the following comments.

“Hamas deserve our unconditional support. Not because I agree with their strategy – complete disagreement with that – but the situation at hand is if you have no hope … nothing can justify what has been happening to the Palestinian people for 75 years.”

To my mind, the only potentially compelling argument for expelling Tucker is that she was, by these words, endorsing the deliberate killing of civilians. If Tucker had said, for example, “I support the killing of Israeli civilians as a deliberate strategy”, then I would support expelling or disciplining her on the basis of these words. I do not think this is equivalent to what she said. Her explicit disagreement with Hamas’s strategy makes it quite clear that this is not her intention, and even without that sentence, the case would be speculative.

The strongest reason against her expulsion is this. She would not have been expelled if she had been talking about the Israeli government in exactly the same terms:

“Israel deserves our unconditional support. Not because I agree with their strategy – complete disagreement with that – but the situation at hand is if you have no hope … nothing can justify what has been happening to the Israeli people for 75 years.”

I believe the Israeli government is currently doing about two to three orders of magnitude more illicit harm than Hamas, so this asymmetry in favor of the Israeli government is unjustifiable. Even if you think this is a matter on which reasonable people can disagree, you should find the asymmetry worrying.

Having known plenty of people like the speaker, I know what her likely position is. The killing of civilians, especially when it is not accidental, is terrible. However, the largest crime by a lot being committed in the area right now is the oppression and killing of the Palestinian people. Focusing on condemning the sins of Hamas takes away attention from this larger, more pressing issue. I do not think this is a position anyone should be expelled from a university for.

What about the argument that Hamas is a proscribed terrorist organization? It is certainly true that Hamas is led by nasty pieces of work. However, I do not think we should outsource our moral reasoning about what positions are permissible to the Australian government. Perhaps I will reconsider if the government ever lists Likud as a terrorist organization. Serious people do not use government lists as a decisive moral argument. Serious universities do not treat themselves as arms of the foreign policy establishment, especially when they are under no legal obligation to do so.

The crux of the matter for many people is the phrase “unconditional support”. Not that it really changes the matter at hand- one is accountable for what one says- but I have a strong suspicion about what’s going on here. The speaker is likely a Trot/Trotskyist/Trotskyite of the Cliff/Cliffist/Cliffite tendency. Phrases like “unconditional support”, “military support”, “political support” etc. take on a bit of a theological status in these circles and do not necessarily mean what common sense would suggest. The full phrase is “unconditional but critical support”. Here John Molyneux, of the Cliffist International Socialist Tendency, clarifies its meaning:

> However, our attitude to the ANC is only one example of a general stance – unconditional but critical support – which Marxists take towards numerous movements round the world today. For example we support the Sandinistas in Nicaragua against US intervention and the Contras but criticise their alliance with the Nicaraguan bourgeoisie and their maintenance of capitalism. Another example is the IRA, who we support against British imperialism and the Orange reactionaries but criticise for their reliance on terrorism and failure to mobilise the working class.

Thus, there is no fair-minded basis to expel this student because:

There is no clear evidence of the student supporting the deliberate killing of civilians. There is some unfortunate phraseology, but the meaning is clear given the broader context of what she said, and (for what it’s worth) the broader context of her tendency’s intellectual history.

It is unlikely that someone making the opposite claim but on behalf of Israel would be expelled.

In the vast majority of cases, it is wrong to expel someone from a university for speech.

This is not an unfortunate act, done once and then finished with. This is a point that isn’t made often enough about many kinds of wrongdoing, especially institutional wrongdoing. When, for example, a company unjustly fires someone (like the bizarre case of Emmanuel Cafferty, the man fired for making an okay symbol), they continue to participate in that wrong for as long as they could repair the damage but do not, even if years pass. The university continues, right now, to participate in the wrong done to Beatrice Tucker. Every day they have the opportunity to correct this and do not, they effectively do it again.

Thus, with reference to the damage done to Tucker, and the collective harms against free thought, the Bear Council must reluctantly raise its paw.

Until such time as Beatrice Tucker is:

Reinstated as a student.

Apologized to.

Compensated for such financial disadvantages as may result from this decision.

The curse of Bear Council is upon the Australian National University. Bear Council calls upon the angels of justice to punish them in a thousand ways. All the best students who were going to go there for grad studies will get better offers elsewhere, and so too all those thinking of joining the staff. Cockroaches will swarm the office of the Vice Chancellor. Hayfever will be upon those of the University Senate who do not oppose this act. All the administrators, academics, managers and executives complicit in this act will suffer insomnia and terrible guilt until they repent. The awareness of this act will resurface again and again. The finances of the University will spiral out of control. The students will turn against their own university, as will the alumni. The spiritual void in all those who cooperated with this decision will be revealed to the world. The wrath of all the good people will fall upon them. Memes shall be made depicting them as the virgin and Beatrice as the Chad. Myriad other irritations, regrets, punishments, incompetencies and incapacities will afflict them. People will stick bubble-gum all over campus.

May it be so.

There may not be a spiritual reckoning

As long-term and short-term readers of the blog know, I take the possibility of an AI apocalypse (in the sense of the ending of this particular phase of humanity) pretty seriously, along with a bunch of other apocalypses. I think I’ve figured out one of the many reasons why a lot of people reject the idea. When they imagine the end of it all, they imagine a spiritual confrontation and crisis: a moment of grappling with the sins of our civilization, for example a moment where people wake up, possibly too late, to global warming.

These social critics look at tech billionaires and see their banal sociopathy. They look at awful AI booster accounts on Twitter, often nearly as soulless as crypto booster accounts. They look at all this and say “hey no, it can’t end like this, with nothing shown and nothing proven”.

Consider this passage from Helen De Cruz:

> Old and established robust political views that worked well in the past do not seem to be working anymore. The liberal consensus has been shattered, there is a plethora of postliberal ideas fomenting in different corners of society. Political parties are by and large devoid of vision. Hovering up the bigoted anti-immigrant vote seems to be the main game, no ambition whatsoever to be clear-eyed about what a future could look like other than more of the same and Manichean posturing. Zombie ideas, which were never good to guide policy continue to reign, while good ideas fail to be adopted. There is no solace to be found in the billionaire ideology du jour either, longtermism/TESCREAL and related eugenicist and utilitarian visions of the future. Putting our hopes in AGI, which may prove our salvation, or may spell our doom, a constellation of religious-like ideas based on pseudoscience, will not help us to flourish.

I have a lot of respect for De Cruz’s writing, but here I think I sense an error. Reading between the lines (admittedly, it is not stated explicitly), the idea that AI will not go boom seems to be linked too tightly to the ideological vacuity of the oligarchs pushing AI development. In truth, the moral, political, and intellectual bankruptcy of the tech CEO set and the tech boosterist set provides no such guarantee.

I think a lot of people I respect have mixed, perhaps without realizing it, the idea of a spiritual reckoning into their vision of the end of this age of humanity. It won’t necessarily be like that! It could be, for example, someone releasing an extremely deadly pathogen, and industrial civilization dying as a result. It could be a nuclear war over the stupidest misunderstanding imaginable. The end of our age won’t necessarily reveal the best of us, it might not even reveal the worst of us, it might just… happen. History doesn’t have to climax the way a novel does, and the Trotskyist idea that history only ends when we get the politics just right to immanentize the proletarian eschaton is an intellectual’s false hope. There’s a Jewish idea I’m quite enamored of, that the generation before the eschaton will be either the most righteous or the most wicked- this is a misconception with ancient roots, but a misconception only.

In which I rant about our job market

It looks like I’ve finally got a part-time job (I could still really use paid subscriptions though!). Two comments on the process:

Most jobs I applied for required me either to certify that I had never been found guilty of misconduct at a previous job, or disclose and describe prior findings if there were any. Some even required a statutory declaration to this effect. Fortunately, I haven’t! But this is absolutely fucked. In Australia, it’s illegal to reject someone on the basis of criminal record in most circumstances- a policy I very much agree with. In criminal cases, people we trust (for the most part)- judges and juries- are in control. Also, the standard of proof is beyond reasonable doubt. Most importantly, the standard of what counts as wrongdoing is higher.

A prior finding of misconduct against an employee can be made by some fuckwit who owns a convenience store. It can be made by some boss from hell at a large corporation. The rules of evidence are lax. The arbitrators represent company interests rather than social interests. If you can’t force people to disclose criminal history without a good reason, then it seems completely fucked to weigh people down with misconduct findings for life, or, more likely, just to create a culture of lying about them.

The strategy seems to be to get employees to state, on the record, that they have had no previous difficulties along these lines, giving the company the option to fire them at any time thereafter for having lied during the application process, should that information come to light. This is close to my heart: I was almost disciplined for forgetting things in a previous role, when my ADHD was poorly controlled.

A friend of a friend who works as an auditor (and therefore needs everything in her record to be shipshape) was rejected from job after job because she had a previous misconduct finding for speaking out against bullying. Employers didn’t even bother to find out what the misconduct finding was- they simply didn’t hire her because she had one.

Bear Council is concerned by this situation and will continue to monitor it as it develops.

Finally, it seems like it’s just normal for hiring processes to take four to six months now. That’s not good enough! It’s at the level where it’s a serious cost to the public purse, and likely a serious factor inflating the unemployment figures. This is becoming a problem for public policy. All the more reason to raise the dole to the poverty line. In a world where even in a tight job market finding work takes six months, it’s the least we can do.

You should read this

https://ericneyman.wordpress.com/2021/06/05/social-behavior-curves-equilibria-and-radicalism/

Nihilism

There’s a growing genre of people on Twitter fantasizing about killing numerous innocents, or even children, to save the lives of their own children. The most recent cases relate to the conflict in Gaza, but the genre is an old one, and it extends far beyond such cases.

An idea seems to have developed: the my-family exception to morality. I’ve encountered it myself in much less drastic cases. When I worked as a receptionist in an emergency department, it was abundantly clear that many people who would never yell at a receptionist, nurse or doctor if they were kept waiting felt justified in doing so when it was their child or parent. This is an understandable lapse, so long as one doesn’t try to pretend it makes one praiseworthy.

One of the factors that makes the “my family” exception to morality so totally unacceptable is its flexibility. There’s scarcely a wrongdoing that can’t be justified by it. I need to do this grubby thing so I can keep my job for my family. I need to shoot first and ask questions later so I can go home to my family. I need to not breach the door at Uvalde so I can go home to my family. I need to accept this bribe for my family. I need to deny refugees shelter because it’s in the best interests of my family.

Don’t get me wrong, you absolutely should make sacrifices for your family- morality demands it- but you should sacrifice only those things which are yours to sacrifice. You don’t get to sacrifice other people.

If you’re proud of the fact that you would kill numerous innocents to save the life of your own, we have a word for you- nihilist. You do not, in the final instance, believe in anything beyond the contingencies of your own specific attachments. We’ve understood for hundreds, if not thousands, of years now that one simply cannot build a society on this basis. One of our proudest achievements was taming people like this. In the final instance, in the longest arc of history, people like this will always lose because they cannot, by definition, unify their interests behind a common cause they agree takes priority.

An aside on working in the emergency department

One time I was working in the emergency department late at night when a delirious young man from Colombia came in by ambulance. He was ranting about “the pale woman with the blue lips” who had kissed him, and then everything went dark. He seemed oblivious to all around him, and he himself was deathly pale. We’re all in agreement this was 100% a vampire right?

Hereditarian Noblesse Oblige?

Beria, who now comes in woke form, makes the following point:

To this I would add that if you are a hereditarian, nothing is stopping you from using this as a reason to help poor people now. So often I have seen (usually politically conservative) people say “Why won’t the left acknowledge that a contributing factor to the plight of some, but not all, poor people is their lack of intellectual firepower, which makes it difficult to get and hold a job and to navigate this confusing modern world? If only the left would acknowledge this, we could try to help poor people on the basis that they often lack intellectual privilege.”

I have good news! You don’t have to wait until the world agrees with you on the causes of poverty to start campaigning for anti-poverty measures, like unemployment payments, basic incomes etc.

You don’t even have to wait till the world agrees with you on hereditarianism to say things like: “One good reason to support welfare payments is that the modern world is complex. Even simple jobs are increasingly difficult. Some people simply can’t cope intellectually”. This is a valid point regardless of whether the causes are hereditary!

For myself, I believe that intelligence is heritable (though, to be clear, not along racial lines). I have never found this even marginally relevant to the work I do in advocating for higher welfare payments. I think it is an important point that the modern world is just too fucking difficult for some people. This simple point is overlooked by both the left and the right, but it doesn’t depend on how we apportion intelligence between environment and genes. Further, while lack of intelligence is one important cause of this world being much too difficult for some people, it is not the only one!

Even more bluntly, I do not think there are many people out there who are poised to start advocating for redistribution once the hereditarian causes of IQ and genetic privilege are finally acknowledged. I think, on the contrary, that if hereditarian discourse were more common, people would use it as a shield against redistributive claims: “We’d better not, it might be dysgenic”. I do not think, in the main, that we live in a world where people are dying- just dying!- to help, if only the debate were exactly to their taste.

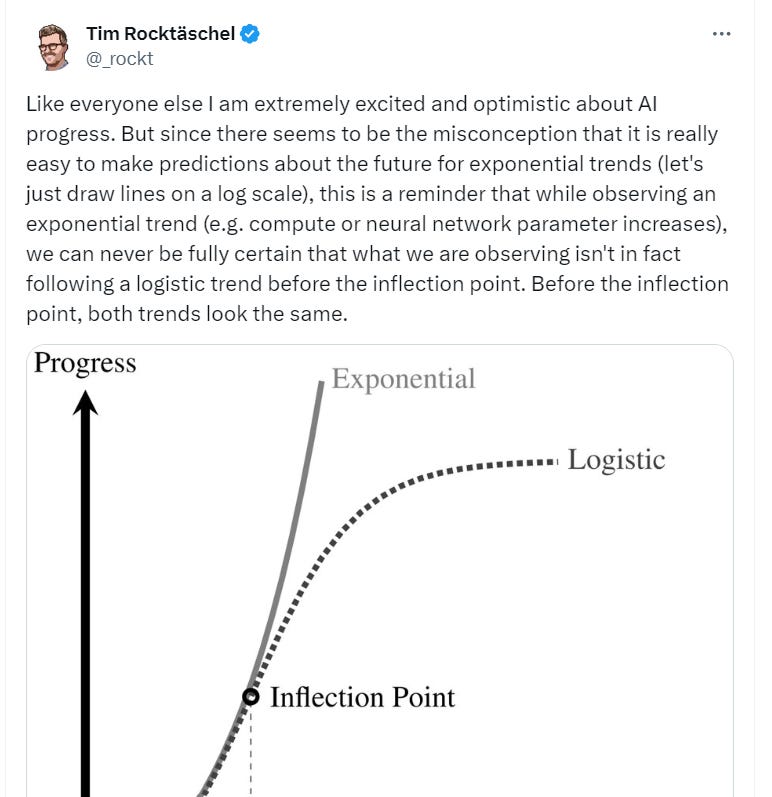

Of inflection points

During COVID, a lot of people didn’t understand exponential growth. You might remember people saying junk like “It’s only a thousand cases, that’s less than 1/300,000th of the United States population.”
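To see why the “1/300,000th” framing misleads, here is a rough arithmetic sketch in Python. It assumes a population of about 300 million (implied by the quote) and a hypothetical doubling time of three days; neither is meant as an epidemiological estimate.

```python
import math

CASES = 1_000
POPULATION = CASES * 300_000  # "1/300,000th" of the population implies roughly 300 million people

# Doublings needed for 1,000 cases to cover the whole population,
# assuming (unrealistically) unchecked exponential growth.
doublings = math.log2(POPULATION / CASES)

DOUBLING_TIME_DAYS = 3  # hypothetical doubling time, for illustration only
days = doublings * DOUBLING_TIME_DAYS

print(f"{doublings:.1f} doublings, about {days:.0f} days at one doubling every {DOUBLING_TIME_DAYS} days")
```

The point is the shape: “a tiny fraction” is only about eighteen doublings away from “everyone”, so the size of the fraction says little on its own.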

In the debate about how good AI will get, a lot of people are making a much more sophisticated point, namely that a logistic curve looks like an exponential curve until it doesn’t.

Fair enough! I agree. The curve could bend. They do that. We don’t know. It seems overwhelmingly likely that at some point the process of building systems able to handle more and more tasks will hit a limit.

But a lot of people seem to have covertly added the following illicit premise:

The inflection point will come before the point at which AI can do [something I’m worried about, like replacing most white-collar workers].

The truth is, we (me and at least most of my blog readers) don’t know. The curve could have bent with GPT-3. The curve could bend before we get to the reliable performance and non-insanity needed to replace most call center operators. The curve could bend when we can replace some types of lawyers but not others. The curve could bend after the engineering expertise necessary to produce a vast, efficient Dyson swarm but before the capabilities needed to build a Dyson sphere. The curve could bend after the intellect necessary to create the void-fluxer manifex engine, but before the intellect necessary to manifest the archon of high eschatonics. I dunno.

Now some of those are more likely than others! To be sure, to be sure. But “curves can bend” is a reason for uncertainty, not smug confidence.
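A small Python sketch of why “curves can bend” cannot, by itself, tell you where they bend. It builds two hypothetical logistic curves: one levels off at 1,000, the other at a ceiling 1,000 times higher, with its midpoint shifted so the two early phases coincide. The growth rate and ceilings are arbitrary illustrative choices, not estimates of anything about AI.

```python
import math

def logistic(t, ceiling, rate, midpoint):
    """Logistic (S-shaped) curve: bends at `midpoint` and levels off at `ceiling`."""
    return ceiling / (1.0 + math.exp(-rate * (t - midpoint)))

RATE = 0.5  # hypothetical shared growth rate

# Curve A levels off at 1,000. Curve B levels off 1,000x higher; its midpoint is
# shifted so that ceiling * exp(-rate * midpoint) is equal for both curves, which
# makes their early (near-exponential) phases practically identical.
A = dict(ceiling=1e3, rate=RATE, midpoint=20.0)
B = dict(ceiling=1e6, rate=RATE, midpoint=20.0 + math.log(1e6 / 1e3) / RATE)

for t in (0, 5, 10, 20, 30, 40):
    a, b = logistic(t, **A), logistic(t, **B)
    print(f"t={t:>2}  A={a:>10.2f}  B={b:>10.2f}  B/A={b / a:>7.2f}")
```

The ratio B/A stays within about 1% of 1 up to t = 10, is about 2 at curve A’s inflection point (t = 20), and is in the hundreds by t = 30. Data from the early, near-exponential phase alone cannot tell the two curves apart.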

I’m going to give my best guess here to keep myself honest for posterity. I think that, twenty years from now, progress driven primarily by scaling will be seen as having hit significant limitations from around the introduction of ChatGPT. This is not to deny there was tremendous progress after that point, or even to deny that there might be critical progress largely driven by scaling in the future. I merely suspect that unless significant progress is made outside scaling relatively soon, AI will be perceived as having undershot expectations until such time as a new “big” strategy comes along. No one knows when that will happen.

But I don’t know. I don’t know if I’m right about scaling. I don’t know to what extent significant alternative strategies work or are underway. I do know that whatever happens, posterity will say that it was obvious. It is not; we face radical uncertainty and should be honest about that.

A warning regarding Mechanical Turk studies and similar

A paper I read recently measured the test-retest stability of political attitudes, over a long gap, in two separate experiments. Experiment 1 used a general population sample; Experiment 2 used Mechanical Turk users. Side note, but in this period of history it’s bizarre that no one has forced them to change that name yet.

There was much less change between the two time periods for the MT users. The authors suggested a compelling explanation. MT users fill out surveys all day. They have likely been asked about their political attitudes before. They are not just making up a number on the spot. Thus the answers they gave concerning their political attitude changed less, because there was less randomness in how they picked those numbers.

However, I noticed this has disturbing implications for experimental studies with MT users. Suppose you have some hypothesis of the form:

Experimental manipulation X will promote Y pattern of responding on Z question.

Suppose that your respondents have seen question Z, or questions like it, many times before, and thus already have an answer in mind. It is possible that your experimental manipulation will work drastically less well, or not at all, as a result.

Of wrongdoing and statistics

This section has been retracted due to an error with my included toy figures and will be restored once I’ve unscrewed them.

My idea for a big Terror Management and Politics study

An existing literature proposes that the salience of death may affect stated political views: e.g. seeing a dead body on social media might make you more likely to give conservative answers about same-sex marriage. The status of this literature after the replication crisis is unclear. Many existing studies are underpowered. The internet, however, makes it relatively cheap to run big experiments. As far as I can tell, no one has yet run a large experiment on the topic.

If I can rustle up the 4,000 dollars or so I need (or, ideally, find free participants, for the reasons previously stated), the proposed experiment will work as follows. 2,000 participants (a number based on power calculations) will be randomly assigned to one of two groups. In group 1, participants will complete, after a series of demographic questions, a series of questions about death intended to make death salient. Within reason and the bounds of good taste and ethics, every effort will be made to confront the participant with their own mortality. They will then complete a series of questions about their political attitudes, both overall and on cultural and economic issues. In group 2, participants will complete the same set of questions but in reverse order: political questions before death-related questions.

The primary dependent variable will be a 0 to 10 self-report scale of left-right affiliation. If there is a difference of at least a sixth of a standard deviation between the two groups, the question will resolve in favor of the appropriate option. Given the sample size, such a difference will be highly statistically significant (p < 0.001). On the other hand, if the difference is less than a sixth of a standard deviation (which I judge to be below the threshold of practical significance), the question will resolve as per the appropriate option even if the difference is statistically significant.
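A rough check of the numbers above in Python, assuming two equal groups of 1,000 and using the large-sample normal approximation for a difference in means measured in standard-deviation units (a real analysis would more likely use a t-test, which gives nearly identical results at this sample size).

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # mean 0, sd 1

def z_for_difference(d, n_per_group):
    """z statistic for a standardized mean difference d between two equal-sized groups.

    Large-sample approximation: SE of the difference = sqrt(2 / n) standard deviations.
    """
    return d / (2 / n_per_group) ** 0.5

def two_sided_p(z):
    return 2 * (1 - STD_NORMAL.cdf(abs(z)))

def power(d, n_per_group, alpha=0.05):
    """Approximate chance of a two-sided significant result when the true difference is d."""
    z_crit = STD_NORMAL.inv_cdf(1 - alpha / 2)
    return STD_NORMAL.cdf(z_for_difference(d, n_per_group) - z_crit)

d = 1 / 6  # the "sixth of a standard deviation" threshold
n = 1000   # 2,000 participants split evenly between the two question orders
z = z_for_difference(d, n)
print(f"z = {z:.2f}, two-sided p = {two_sided_p(z):.5f}, power at alpha=0.05: {power(d, n):.2f}")
```

Under these assumptions, a true difference of exactly a sixth of a standard deviation gives z ≈ 3.7, a two-sided p of roughly 0.0002, and about a 96% chance of reaching significance at the conventional 0.05 level.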

Some other significant questions I want to test are:

Are the effects mediated by religious belief?

Does the terror manipulation affect stated religious beliefs?

Is terror polarising- does it move the left left and the right right?

Are there bigger effects on cultural questions than economic questions?

Given the cost of the experiment, I will be consulting with people with knowledge of the area before running the experiment and will aim at something of an adversarial collaboration- taking on advice from both skeptics and believers in the relevant phenomenon.

One idea I have for a comparison is an immortality condition. A group of people are asked to imagine what it would be like to be a superhero: what powers they would want, what glamorous appearance they would choose, what their superhero name would be. They are also told that part of their suite of mystic abilities will be agelessness and something close to invulnerability (we can’t make them fully invulnerable; that would be such a ridiculous superhero that it would break their suspension of disbelief).

Thoughts?

Is character just one’s overall tendency to do culpably right and culpably wrong things? Not as far as ordinary people are concerned.

Imagine two people. Justin assaults people on the street when he gets drunk, because life is frustrating. Mercia is a prosecutor, and for career reasons she pursues cases that justice, and sometimes the law, indicates she shouldn’t. She understands she is doing this, and she isn’t at risk of losing her job if she stops. I think it’s clear that Mercia and Justin might well have equal inclinations towards knowing evil, with equally poor excuses. If anything, I am inclined to say Mercia is worse. Yet most people, I think, will instinctively revile Justin’s character far more, probably even if there are mitigating factors in Justin’s past but none in Mercia’s.

Is this a banality of evil effect? No, I don’t think so.

Consider a post-apocalyptic Mad Max-style raider. Metaphysiocrat has a character called Blooddrinker Bigfuck. I asked ChatGPT to depict him:

Blooddrinker is a raider in a post-apocalyptic wasteland, so as you can probably imagine he’s a real bad guy. We won’t go into details, but I’m sure you can guess much of what’s on the list. If he were real, I can imagine the sympathetic biopic, my fellow gay guys on Twitter “ironically” swooning over him, the thinly veiled depictions in romance novels, the book-length psychological treatments- not as a study in monstrosity, but in greatness. The self-help book, Manage Your Staff Like Bigfuck. Now compare him to Justin. Certainly, people will intuitively feel he’s of better character than Justin, yet there is nothing banal about his evil.

Is it all just status? That’s a big part of it, but not all of it I think.

But thus far I’m not convinced that I’ve made my point. I feel like I’m losing this argument with myself. It might seem like I’m just banging on about how high-status people who achieve X level of badness are more respected vis-à-vis their character than low-status people who do the same. No shit! But I really do think this goes deep into our evaluation of character in a way that is startlingly difficult to root out. I’m not even sure there is an evaluation of character left after it’s gone.

Let me try to illustrate what I mean by it not just being a “mistake” or “bias”. I do think it is both a mistake and a bias in a lot of senses, but this doesn’t capture how far this one goes. Imagine a social circle of socially conscious vegans. They are considering two people.

Jerrow has been loudly denounced for doing, for want of a better word, soft MeToo stuff. Multiple partners have stated that he is emotionally manipulative, a real shit boyfriend. He hasn’t exactly been accused of abuse, but people aren’t happy at all and he doesn’t deny any of the allegations. Now consider Wenes. Wenes is an omnivore. Wenes knows all the reasons he should be vegan. He even admits on some level that he should be vegan, but he just… isn’t. I posit that almost any social circle like the one I described will, so long as its members are impartial and have no prior interaction with either person, gravitate towards judging Wenes to be of better character than Jerrow. Even if you point out to them that Wenes does more knowing harm, they won’t regard this as an inconsistency; they’ll try to defend their assessment via some bit of reasoning, the content of which will vary from person to person.

Beyond banality and status, there are a few other factors that reduce how strongly people attribute evil character to evil actions. Character, as generally assessed, depends heavily on:

Tendency to engage in wrongdoing which is a threat to me and my social circle, and

Tendency to unpredictably engage in wrongdoing which is a threat to me and my social circle.

My sense is that there is a profound human tendency, even among educated people, to assign character judgments partly on the basis of factors like those we’ve discussed. I suspect that we have a whole mental system set up to evaluate “character”, and this system operates by its own rules and logics, rules and logics that sound fucked up when spelled out in terms of measures of wrongdoing.

I think, further, that this is a threat to virtue ethics, at least in practice, because it’s not just a bias you can dispense with through awareness. It’s profoundly integral to our concept of what it means to have good character, and even if you point it out to people, they will in many cases fight to defend it (e.g. Jerrow and Wenes). This means that virtue ethics is always going to have a somewhat elitist character, as well as a somewhat “what’s good for the people nearest to me” character, much more so than moral judgments in general.

It’s not even clear that what is going on here, in all cases, is a mistake. Of course, one could take a kind of Thrasymachian line and say “Yes! Status and relationship to the ingroup should be part of our moral evaluation of others”. I, naturally, do not like this line. A softer option would be to say that our concept of character is not, primarily, a tool of abstract moral evaluation, but instead a pragmatic yardstick by which we orient ourselves in relation to others. This may well be true, but it seems to me that people happily switch back and forth between the moral and pragmatic senses of character evaluation.

Hurry up and make a utopia simulator

What kind of city would you like to live in, if you lived in a post-scarcity world? What city would you build for the people you most loved, or for everyone, if only you loved them enough?

Most city simulators are centered on survival, and on processes of accumulation- you get money, and then that money is reinvested to build more buildings etc. But when people are given scope to create anything at all, often what captures our imagination is joy. Who, when playing Pharaoh or something like it, has not made a city with lavish entertainment everywhere, with endless parks, baths, and wonders? What if there was a game entirely about that?

I want to play a game without material limitations, in which the goal, to the extent there is any goal, is to synergistically combine the good things in life in order to create incredible experiences for the inhabitants of the city. For those who need it, points can be given for synergistic combinations of experiences and well-structured cities. Yet I imagine that for many players, the fun will be free creation. On the surface, vast wildernesses, with cities floating above them. Cannons use inertial dampeners to safely fire you from one side of the city to the other. Light shows fill the sky. Moving blocks allow endless rearrangement.

If well designed, this could be, for want of a better phrase, a very philosophical game. How often do we confront the question: what do you really want? Do you want a world focused on companionship, adventure, learning or…?

People are excellent at avoiding the question- we mustn’t talk too much about what utopia looks like because the people will decide that after the revolution. We mustn’t talk too much about what utopia looks like, because that must be a collective choice (aren’t you part of the collective?). We mustn’t talk too much about what utopia looks like, because it is vain to think post-scarcity is possible in the near future.

Well I’m sick of that, and I want to play a utopia builder. I want us all to play one, because being clear about our ultimate goals beyond banal limits will make so much else clearer.

> We know that someone participates in at least one bad activity but know nothing else about them. What is the probability they are a bastard?
>
> It’s quite low. About 20% I think.

No, it's over 50%.

You said that 10% of the population participate in each bad activity, and 90% of those are bastards.

With 20 bad activities, the absolute maximum share of non-bastards taking part is 20 × 10% × 10% = 20% of the total population. In reality it will be noticeably lower because of overlap.

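But practically all of the bastards will have done at least one bad thing. In each activity, bastards make up 90% × 10% = 9% of the population; since bastards are 20% of the population, each bastard's chance of doing any individual bad thing is 9%/20% = 45%. With 20 independent activities, the chance of doing none at all is (1 - 45%)^20 = 0.55^20, which is less than one in 100,000. So the bastards in your "at least one bad thing" sample amount to roughly 20% of the total population, against at most 20% non-bastards. That puts the probability at about 50% even in the worst case, and the overlap among non-bastards pushes it above 50%.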
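To check the arithmetic above end to end, here is a minimal Python sketch. It assumes the figures from the thread (20 activities, 10% participation each, 90% of participants bastards), takes bastards to be 20% of the population as implied by the 9%/20% step, and assumes every person's participation in each activity is independent for both groups. The function names are hypothetical, chosen only for illustration.

```python
# A minimal sketch: P(bastard | did at least one bad activity).
# Assumptions (labelled, not all stated in the original thread):
#   - 20 bad activities, each done by 10% of the population, 90% of whom are bastards
#   - bastards are 20% of the population (implied by the 9%/20% = 45% step)
#   - each person's participation in each activity is independent

N_ACTIVITIES = 20
PARTICIPATION = 0.10      # share of the population doing each activity
BASTARD_SHARE = 0.90      # share of each activity's participants who are bastards
BASTARD_FRACTION = 0.20   # assumed share of bastards in the population


def sample_shares() -> tuple[float, float]:
    """Return (bastards, non-bastards) who did at least one activity, as population shares."""
    other_fraction = 1.0 - BASTARD_FRACTION
    # Per-activity participation probability within each group.
    p_bastard = PARTICIPATION * BASTARD_SHARE / BASTARD_FRACTION    # 0.09 / 0.20 = 0.45
    p_other = PARTICIPATION * (1 - BASTARD_SHARE) / other_fraction  # 0.01 / 0.80 = 0.0125
    # P(at least one) = 1 - P(none), treating activities as independent.
    bastards = BASTARD_FRACTION * (1 - (1 - p_bastard) ** N_ACTIVITIES)
    others = other_fraction * (1 - (1 - p_other) ** N_ACTIVITIES)
    return bastards, others


def posterior_bastard() -> float:
    """Bayes' rule: share of the 'at least one bad thing' sample who are bastards."""
    bastards, others = sample_shares()
    return bastards / (bastards + others)


if __name__ == "__main__":
    b, o = sample_shares()
    print(f"bastards in sample: {b:.4f}, non-bastards: {o:.4f}")
    print(f"P(bastard | at least one): {posterior_bastard():.3f}")
```

Under these assumptions the non-bastard share comes out around 17.8% of the population, below the 20% ceiling because of overlap, and the posterior lands at roughly 0.53.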

Some remarks, part by part:

> “Hamas deserve our unconditional support. Not because I agree with their strategy – complete disagreement with that – but the situation at hand is if you have no hope … nothing can justify what has been happening to the Palestinian people for 75 years.”

Unfortunate phrasing indeed. The line about strategy suggests the support is not actually unconditional, and one must wonder what support concretely means given a "complete disagreement" about strategy. But however confused or contradictory the quote may be, it is much less heinous than it first appears.

> The strongest reason against her expulsion is this. She would not have been expelled if she had been talking about the Israeli government in exactly the same terms:

This does not seem, in itself, to be an especially compelling argument against the expulsion. If one rejects the idea that weakness is a morally exculpatory factor (as I hold one should), then the absolute magnitude of the harms matters less than the degree of wickedness of the actions taken. I would guess that the university administrators see the flipped statement supporting the Israelis as orders of magnitude less troublesome than the actual statement, but whether that view is justified depends on one's judgment of the overall conflict. The symmetry argument therefore reduces to an argument about the overall moral nature of the conflict.

In the end, however, I support freedom of speech (your point 3) strongly enough that I also strongly disapprove of the expulsion, despite not endorsing the symmetry argument, so I suppose we end up in similar places on the issue writ large.

On the spiritual reckoning (or lack thereof): it's good to see you explicitly call out the rather bizarre rhetorical move, usually made implicitly, of treating "I disagree morally with Silicon Valley idea clusters ⇒ AI won't be significant" as a background fact.

> In the debate about how good AI will get, a lot of people are making a much more sophisticated point, namely that a logistic curve looks like a sigmoid curve until it doesn’t.

Should this say "an exponential curve looks like a logistic/sigmoid curve until it doesn't" or similar? A logistic curve is a sigmoid curve, so the sentence doesn't really make sense as written.
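For reference, this is why the two are easy to confuse early on. Using the standard logistic function with ceiling $L$, growth rate $k > 0$ and midpoint $t_0$ (generic symbols, not taken from the post):

$$
f(t) = \frac{L}{1 + e^{-k(t - t_0)}} = \frac{L\, e^{k(t - t_0)}}{1 + e^{k(t - t_0)}} \approx L\, e^{k(t - t_0)} \quad \text{for } t \ll t_0
$$

Well before the midpoint, $e^{k(t - t_0)} \ll 1$, so the denominator is close to 1 and the curve is practically indistinguishable from an exponential; the two only part ways as $t$ approaches $t_0$.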

> If anything, I am inclined to say Justin is worse. Yet most people, I think, will instinctively revile the character of Justin far more-

Should this say "I am inclined to say Mercia is worse"? The "yet most people" following it seems ill-fitting if you're actually agreeing with the hypothetical majority.

Another remark on the character judgments is that people may more readily form negative judgments about concrete, familiar harms (e.g. drunken assault, emotional manipulation, etc.) than about abstract or novel ones (e.g. tax fraud, meat-eating, etc.). Even though they would, if pressed, rate the harms as similar, the former categories are more emotionally compelling.

The note on designing utopias brought to mind the old Fun Theory sequence (https://www.lesswrong.com/posts/K4aGvLnHvYgX9pZHS/the-fun-theory-sequence). Have you read it? It covers some interesting ground on the topic of the elements that would actually be necessary for a good-to-live-in utopia.