When the board of OpenAI moved to fire Sam Altman, much of Twitter leaped to his defense. Their narrative was that the board was composed of busybody know-nothings committed to abstract ideals, poised to sink a company with the potential to deliver real growth. The board’s terse statement that Altman’s behavior had raised questions of honesty attracted particular ire. The board members were subject to vitriolic criticism and, at the risk of sounding precious, some of it was in my view quite sexist. For example, it was alleged that Helen Toner was not qualified for the board because she had relatively little academic or industry experience, and her highest qualification was a master's in security studies. What this ignored, among other things, was her impressive record of journal articles and other publications.

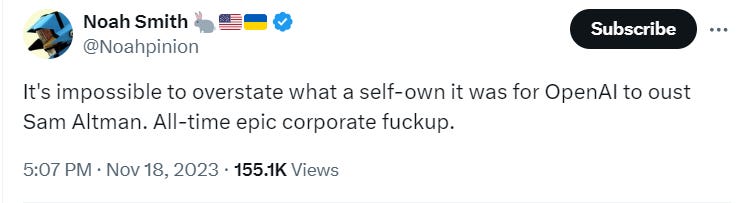

A lot of this seems to have reflected Sam’s status as a popular figure on Twitter. Some of the people pushing the pro-Altman stuff were ideologically committed accelerationists who saw the board as safetyists, but many were not. Many were just generic Twitterati who felt fond of Altman for some reason.

Now many of the same people are calling for Altman’s head because:

The superalignment team has disbanded and Ilya Sutskever, himself a figure of cult popularity, has left.

It was revealed that departing staff members were required to sign a lifetime non-disparagement agreement to keep their equity.

It appears that OpenAI may have deliberately used a voice actor with a voice similar to Scarlett Johansson’s after Johansson herself refused to be the voice of GPT-4o.

In light of all this, people are taking the old board’s accusations of dishonesty more seriously.

The various Twitterati now turning on Altman could have avoided being in this contradictory position if they’d just recognized that they knew nothing of Altman, Toner, Sutskever, etc. Instead, they chose to intervene in a process they didn’t understand.

If the fight at OpenAI had been about ideology directly, with Altman advocating acceleration and the board deceleration, then I could understand why people would, risky as it is, want to get involved. But it wasn’t a fight about ideology; it was a fight about specific, internal questions of ethics. People wanted it to be a fight about acceleration versus deceleration, or a fight about anti-progress woke bureaucracy handwringing DEI brahmins standing in the way of engineers who Actually-Get-Things-Done-dammit, so they imposed that logic on it. Maybe in some ultimate sense, it was a fight about these things at the root- I don’t know! You don’t know! But Twitter wants to stamp the hot wax of every conflict with the seal of the culture war.

I don’t know whether Twitter’s intervention in favor of Sam Altman did anything. My guess- and I emphasize I’m speaking from ignorance here- is that it was the staff revolt and threat to leave en masse that broke the board. It is possible that the revolt on Twitter and other social media against Altman’s firing emboldened the staff rebellion. Regardless, this much is true of Twitter: they chose to throw rocks on the basis of vibes.

Indeed, there’s a kind of perverse mechanism here that rewards seeming confident about something precisely in inverse proportion to how much the public really knows about it. This confidence can make you seem like an insider or a world-weary observer who’s seen it all before and can guess the real factors in play.

I cannot emphasize enough that you do not know, in any sense, the niche celebrities on your phone. You cannot arbitrate their disputes. You should think four times before making a judgment about which of them is right. Even if you happen to be right, the situation is almost certainly richer and stranger than you think.

People often think that interpersonal relationships are easy to evaluate, while things like policy direction are really hard. I think the opposite is true. You’re far more likely to form a fair-minded opinion on what an organization is doing than on the individual rights and wrongs of those involved.

I’m inclined to think that the latest stuff about Sam probably is a bit damning, but that’s only a hunch. Consider, for example, Sam Altman’s claim not to know that policy required employees to sign a non-disparagement agreement upon leaving OpenAI. It sounds dubious, doesn’t it? Surely a CEO would know that? I agree it’s doubtful- but I don’t know. As someone who once headed a medium-sized organization, I’d say it’s at least possible he didn’t know. I’m sure that OpenAI’s lawyers are getting people to sign stuff all the time- lawyers are like that. It seems at least possible that he might have either not been told about it or, more likely, waved it through as part of a large package of measures that he didn’t go through individually. Think about how much you can be aware of at any one time. Now, CEOs of course use every trick in the book to have the largest possible scope over their own companies, and those tricks do work to a degree, but ultimately, they only have as many hours in the day as you do. The awful truth is that you can’t read, let alone carefully read, everything you’re supposed to be on top of as a large company executive. If you put a gun to my head I’d say Altman probably was aware of the non-disparagement agreement, but I don’t know and neither do you.

If you want to call for Sam’s firing because you’re dissatisfied with the record of OpenAI at this point, or call for him to keep his job because you are satisfied, so be it. All I’m saying is this- don’t imagine that you know who he is, or the nature of his relations with others. It’s silly enough to have a parasocial relationship with a podcaster; to have one with a CEO is laughable. Sam ain’t your mate. Between the distance and all the layers of impression management, you know virtually nothing. A lot of the dudes who rushed to Sam’s defense probably think they’re above celebrity gossip; they’re not. In truth, you probably have a much better chance of grasping something of the soul of a favorite actor or musician than a favorite CEO.

Rather than assessing whether or not Altman is a good guy, or even focusing too much on specific scandals, I’d suggest taking a structural view. Altman, and many of those opposed to Altman, claim that OpenAI is building a techgod. I would suggest that, if a company thinks they are building a god, then regardless of whether they are right or wrong, it is appropriate to apply controls on the assumption they are right. That means getting the structure correct. The whole point of structures is to avoid too much reliance on ‘good’ people and create systems that are robust, even as bad and/or incompetent people become involved. A board that cannot control its CEO because that CEO leverages independent popularity with staff and the public is not well structured for building gods. It depends too much on the discretion of one man.

Marx was right about this- capital acts like it has a will of its own. Capital wants to grow- it arises out of the behavior of humans but has its own logic. Like water going downhill, or like fire blindly seeking fuel, capital will always try to subvert barriers to its growth. This only becomes more and more true as the sums increase:

“Capital eschews no profit, or very small profit, just as Nature was formerly said to abhor a vacuum. With adequate profit, capital is very bold. A certain 10 per cent. will ensure its employment anywhere; 20 per cent. certain will produce eagerness; 50 per cent., positive audacity; 100 per cent. will make it ready to trample on all human laws; 300 per cent., and there is not a crime at which it will scruple, nor a risk it will not run, even to the chance of its owner being hanged. If turbulence and strife will bring a profit, it will freely encourage both. Smuggling and the slave-trade have amply proved all that is here stated.” (T. J. Dunning, l. c., pp. 35, 36.)

A lot of people involved in OpenAI think returns of greater than a million percent are ultimately possible. Right or wrong, imagine what they might do.

You should not want the short-term maximization of profit to dominate among people who, from CEO to scientists, seem to believe they are building a god. This is common sense, even if you are 99% sure they are wrong. I’m far from sure they’re wrong, but even if I were sure, I still wouldn’t want a company like this to lack effective oversight. A cabal of would-be god-invokers with enormous economic influence and no effective control structure capable of standing up to the CEO could do vast damage.

In summary

You don’t know the character of the personalities disputing with each other on the internet. They are not your friends. You should avoid weighing in on their interpersonal disputes if you can at all avoid it.

As far as you can, you should judge organizations not by personalities, but by their policies and control structures.

I don’t know whether Sam Altman or the board was right about accusations of dishonesty. What I do know is that, whether or not OpenAI is building a god machine, they are not the sort of organization that should be the personal fiefdom of a CEO.

"An ursine Leviathan, its halo studded with a range of arcane symbols, including one snowflake and several sea urchin fossils, looming over a naval battle fought by yachts, dhows, junks, and moose-riders among the surprisingly well-preserved ruins of a flooded London, while three-winged eldritch horrors circle overhead. Dichromatic woodcut."

But we know all about Kate and Meghan...