As always, looking for work and/or research collaborators, if you know any.

Can we at least be behaviorists about capability?

Can a machine, or even a contemporary large language model: think, believe, desire and be intelligent?

Can a large language model: summarize, invent, solve problems, or behave intelligently?

There is room for reasonable debate about the first set of questions. There is also room for more modest questions such as “regardless of whether LLMs actually think, believe and desire, is it sometimes a useful framework for making novel predictions about their behavior to reason as if they did these things?”

There is no room whatsoever for debate about the second set of questions. There is no deep and fundamental sense in which LLMs can’t really solve problems. If a problem is new, and the LLM gives the right answer, it has solved it. Heck, a calculator can solve problems if the domain is restricted. There is no deep “essence” of solving problems. This is true simply by stipulation. When we ask, “can an LLM summarise?” we are asking a question about what it can be used to do, not about its internal states. We have other language for talking about internal states.

It is true that LLMs can be bad summarisers. So can humans (get an average undergrad to summarise a paper and see how much they ‘hallucinate’). But denying that LLMs can summarise is very odd, because you can replicate the result that they can on your own computer if you have internet access. To summarise is to output a new summary. The process is irrelevant. I don’t have much time for debate on this. Until LLMs came out, it wouldn’t even have been controversial that if you can produce a summary, you can summarise.

Nor is it wise to make general points about the first class of questions about mental states, and then predict based on this that LLMs will never be able to achieve the feats posited by the second class of questions about capabilities unless you can get past the “already-achieved” problem.

Let’s say LLMs can do X in a given domain (e.g. problem-solving, answering comprehension questions, summarising). Now suppose we want to know whether LLMs will ever be able to do X+ε. If you want to predict that LLMs will never be able to do X+ε, you first have to explain how your model is compatible with LLMs achieving X. In particular, you have to explain why there is a qualitative difference between X and X+ε that explains how LLMs might achieve X, but will never achieve X+ε. Many (I won’t name names) seem oblivious to this seemingly obvious point. To make up some numbers: if humans ‘hallucinate’ 10% of the time, ChatGPT-4 hallucinates 30% of the time and ChatGPT-3.5 hallucinates 50% of the time, and you want to say that ChatGPT-7 will still definitely hallucinate more than humans, you have to explain why it’s possible to go from 50 to 30 percent, but not from 30 to 10 percent (the real figures are, in each case, much lower).

[Aside: Humans definitely ‘hallucinate’. An anecdote I like to tell about this: when my friend was growing up, his dad told him that the monarchs of England have to alternate male-female by law. It’s possible that his dad was lying to him, though he thinks this unlikely. It’s also possible someone lied to his dad, but again this seems unlikely. Much more likely is that his dad just had a vague, hallucinatory recollection of it being true and decided it must be right. Humans and LLMs alike muddle up the half-learnt.]

The epistemic difficulties of the “already-achieved” problem aside, if you want to say “LLMs will never achieve X” where X is some well-specified task, especially a task that many humans can do, I respect that, regardless of whether you turn out to be correct. Concrete predictions are good. However, if you just want to hint that LLMs will always be fundamentally limited in some way without spelling out what you mean and exactly what they’ll never be able to do, you get no credit. Even if you turn out to be right, which is entirely possible, you still get no credit.

Finally, I reiterate. We must at least be behaviorists about capabilities. You have capability X if you can do X. I could not be less interested in whether LLMs have “the summarising essence” in addition to being able to produce summaries.

Don’t assume we’re all on the same page about what AGI means

Please, don’t speculate on “when AGI will get here” unless you can define exactly what you’d count as AGI. By the standards that were floating around a decade ago, many would have called contemporary LLMs “AGI”. Artificial General Intelligence is not a phrase with a clear and automatic meaning.

The journal of stuff you couldn’t get published elsewhere

It is often observed by philosophers that their favorite papers are the ones they have the most difficulty publishing. I propose a journal: The journal of papers that couldn’t be published elsewhere (JPTCBPE). In order to publish your paper in JPTCBPE you have to demonstrate that you’ve been trying to get it published for at least 3 years with other journals. Also, you have to have at least one paper published in a reputable philosophy journal elsewhere (lest we be flooded with cranks).

The theory is that if you’ve been fighting to get a paper published for that long it’s probably because you believe it has deep merit to it, even if the system is ill set up to recognize that merit. Work that philosophers can’t get published may be unusually bold or excellent in a quirky way that the normal procedures of publication neglect. For obvious reasons the journal will not be peer reviewed. Quality will likely vary immensely. Caveat lector.

The baseline model of generalized enshittification

So there’s this concept that goes around: “enshittification”. Originally it was a quite concrete model about how social media companies start out offering a valuable service, then, once they have locked in an audience, exploit their monopoly power and cannibalize the platform for ad revenue. Over time it’s been expanded to more and more things. One obvious line of expansion is beyond social media to anything that starts out competitive and then, after building up a customer base, gains a measure of monopoly power, since this allows for a similar form of exploitation to the social media case.

I want to make a quick point that many are already aware of to explain why enshittification may be general. Let us suppose, as a simplification of things, that the quality of X (where X could be a band, a brand, a comedian, a corporation, or whatever) is determined, in a given year, by rolling a die and adding that roll to the rolls from the year before and the year before that. In other words, the quality of X is a moving average (strictly, a moving sum, which differs only by a constant factor).

You can probably guess where this is going. If X is likely to come to the public’s attention when it’s doing really well, chances are it will soon revert to the long-term mean.

Now, you say, this is unfair because there is such a thing as talent, and some Xs have more of it than others. More to the point, talent is persistent. Sure, I grant this, but even so:

1. There’s a strong random element to quality.

2. That random element does fluctuate.

3. X, whatever it is, tends to come to the public’s attention during a peak where the random element is high and propels it into being noticed.

4. And so, by reversion to the mean, things tend to get worse after they are spotted.
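The dice model above is easy to simulate. What follows is a minimal sketch, not a claim about any real band or brand: the notice threshold of 15, the three-year lag, and the number of simulated entities are all made-up assumptions chosen for illustration. Quality each year is the sum of the last three die rolls, an entity is "noticed" the first year its quality reaches the threshold, and we compare quality at that moment with quality three years later, when none of the lucky rolls remain in the window.

```python
import random
from statistics import mean

WINDOW = 3                      # years of die rolls summed into one quality score
NOTICE_AT = 15                  # hypothetical quality at which the public notices X
LAG = 3                         # years after being noticed at which we look again
LONG_RUN_MEAN = 3.5 * WINDOW    # expected sum of three fair dice


def quality_series(years, rng):
    """Quality in each year is the sum of the most recent WINDOW die rolls."""
    rolls = [rng.randint(1, 6) for _ in range(years + WINDOW - 1)]
    return [sum(rolls[i:i + WINDOW]) for i in range(years)]


def simulate(n_entities=10000, years=30, seed=0):
    """Return (mean quality when first noticed, mean quality LAG years later)."""
    rng = random.Random(seed)
    at_notice, later = [], []
    for _ in range(n_entities):
        q = quality_series(years, rng)
        # First year quality reaches the threshold, leaving room to look LAG years ahead.
        hit = next((t for t in range(years - LAG) if q[t] >= NOTICE_AT), None)
        if hit is not None:
            at_notice.append(q[hit])
            later.append(q[hit + LAG])
    return mean(at_notice), mean(later)


noticed, after = simulate()
print(f"mean quality when noticed:      {noticed:.2f}")
print(f"mean quality {LAG} years later:   {after:.2f}")
print(f"long-run mean quality:          {LONG_RUN_MEAN}")
```

Because the rolls that make up quality three years on are independent of the rolls that got X noticed, the later figure should sit near the long-run mean while the figure at notice is at least the threshold. A persistent talent term could be added to each series as a further assumption; the argument in the text suggests the drop after being noticed would shrink but not disappear.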

Random chance quantified will strike many, particularly those who are not used to thinking of things in such reductively quantitative terms, as too cold a description. But think about the particular factors it could be and you’ll soon see there are endless possibilities. A creative burst for an artist. A run of lucky hires for a corporation. A wonderful but fragile interpersonal dynamic for the band members, and so on and so forth.

Hedgehogs versus foxes again

Thought I’d bang the drum about this once more.

Wikipedia defines the distinction between hedgehogs and foxes thus:

Berlin expands upon this idea to divide writers and thinkers into two categories: hedgehogs, who view the world through the lens of a single defining idea (examples given include Plato, Lucretius, Blaise Pascal, Marcel Proust and Fernand Braudel), and foxes, who draw on a wide variety of experiences and for whom the world cannot be boiled down to a single idea (examples given include Aristotle, Desiderius Erasmus, Johann Wolfgang Goethe).

Now over the last decade or so people have become very excited about this distinction again because studies by Philip E. Tetlock suggest that foxes are better than hedgehogs at predicting the future. The reputation of the hedgehog, always the poorer member of Berlin’s duo, is at an all-time low. No one wants to be a hedgehog.

I think I am a fox, though I am not sure, but regardless, I want to say something in defense of the hedgehog. Very often being a fox is described in terms of drawing on numerous frameworks. And who do you think built those frameworks? Usually, hedgehogs. The hedgehog does invaluable work in polishing and crafting singular ideas, which the fox then deploys promiscuously.

Predicting the future, and other tasks of understanding, are collective enterprises. We should be concerned more about society getting it right than getting it right as individuals. This is one of the main things the rationalists get wrong: the emphasis should be on the social process of inquiry, not on being an individual brain genius. Hedgehogs, even if they are not such good predictors, are good contributors to the process of prediction, or at least this seems to me very plausible.

The optimal approach to understanding the world collectively is not always to maximize the rationality of each individual as far as possible. Collective rationality may be aided by specialization, which may look nothing like each individual trying to be as rational as possible. Hedgehogs, as specialists, have an important role to play.

Incidentally, this is related to why, as I have argued previously, confirmation bias isn’t always such a huge issue. Having people irrationally strongly committed to poorly supported ideas can, in some circumstances, be a good thing for the rationality of the whole group, as it gives those ideas a strong advocate.

Why conservatives are likely to be constantly disappointed by their leaders on immigration

Being very much anti-conservative myself, I watch movement conservatism’s paroxysms of self-hatred with alternating a’s, b’s and c’s: amusement, bemusement and contempt. Just as the left feels continuously betrayed by its ‘official’ leadership on economic questions, so too does the right have its own story of betrayal. Probably the thing “grassroots” conservatives care about most in their bones is stopping immigration. They feel continuously betrayed on this by their leaders, whom, I am told, they term “cuckservatives”.

The simplest explanation for this is similar to why the left’s leadership betrays it on redistribution: people with money want immigration high and redistribution low. But I want to posit another, more cultural reason why the aristocracy of the right would behave this way. They’re already a minority (a ruling class is necessarily a minority), and they’ve learned to live with it.

Imagine you’re a working-class right-winger. You feel like white people, who you identify as your people, your in-group, are becoming a minority. That bothers you. Simple enough.

Now imagine you’re a ruling-class right-winger. Who is your in-group? Your fellow contemporary aristocrats. You’re already a minority, you’ve always been a minority. Managing that without getting your heads chopped off has always been part of your burden. The working class has always been distant from you, and you like it that way. Yes, moving from a white to a mixed-race working class may have risks involved (you are after all right-wing and will thus believe this to some extent) and you may have some concerns about demographic transition, but in a sense, it doesn’t change the fundamental situation. Your smallish clique rules, and it is different from and outnumbered by those it rules. If that difference is amplified somewhat in the different skin colors of your servitors, who cares?

This pattern will continue across politics generally: conservatives will be perpetually betrayed by their leaders in a pattern they can never escape. They cannot escape it because conservatism is ultimately committed to the ruling class, but the ruling class is committed to itself. It became a ruling class by focusing on its interests, and so it will remain. Pleading, whinging, crying for the ruling class to support them, appealing to a kinship they feel much more strongly than their lords do: such will be the richly deserved fate of the non-bourgeois conservative.

What psychologists sometimes lose sight of

I was in a talk today about OCD and we were discussing some of the new theories which indicate that OCD is behaviorally and not cognitively led. I don’t know whether these theories are ultimately true, although I am skeptical, but it reminded me of a fundamental issue in how psychologists understand mental illness.

When you have a mental illness like OCD, your main concern is often the content of your thoughts. You’re worried something horrible will happen to you, indeed something awful happening to you is your primary concern. You’re not, in the main, running around saying “oh it’s horrible to be anxious”, you’re running around saying “I might die, that’s awful”.

Your secondary concern, quite a long way down the list, will often be the emotional impact of your thoughts. You feel horrible because you think you might die, and feeling horrible is, well, horrible, so that becomes a concern in itself, and you wish you didn’t feel like that anymore.

Your tertiary concern is how these feelings affect your behavior: how they interfere with tasks, or make you grumpy, or whatever. I admit that, in some cases, behavior could be the primary concern for the sufferer, but this is not usual. The order I give will not apply to everyone, but in general, I think this is the order of priorities for most sufferers, especially in poorly controlled OCD.

For the psychologist, the order of concern is often exactly reversed. The main problem is restoring functionality, and compulsions are often more important in this regard than obsessions. The secondary problem is dealing with negative emotions. The tertiary problem is engaging with the rationality of the beliefs. Psychologists live in a lifeworld in which behavior and function are much more the focus than in the patients’ own lifeworld. I find it unsurprising then that psychologists would advance theories of the pathological processes of OCD that focus on behaviors rather than thoughts. They’re more observable, more real even, for the psychologist. For the sufferer though, the day-to-day experience is encountering what seems like the possibility of dying, or having one’s reputation destroyed, or doing something horrible.

Similar points, I suspect, apply to most neurotic and psychotic disorders.

Law and ethics

There’s a great book to be written about how the existence of law makes us worse at moral reasoning. We tend to pattern our moral reasoning after legal reasoning and this is a terrible framework for thinking about ethics.

To everyone’s great shock, the creators of Gab’s AI are massive losers. Here’s its prompt:

Thanks to @colin_fraser

https://twitter.com/colin_fraser/status/1778497530176680031/photo/1

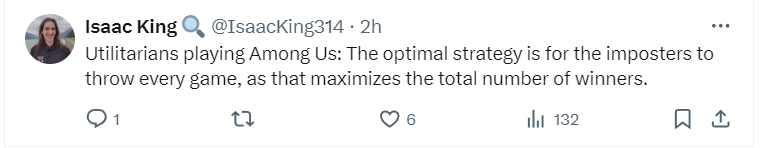

Wherein you have to change the utility function you are aiming at maximizing if you want to maximize your utility

There are a variety of situations in which you have to change your utility function in order to maximize your utility. Consider:

The utilitarian who thinks about maximizing utility during a game will often not maximize utility, because it will be obvious that they’re not wholeheartedly committed to winning and this will make the game less fun.

In decision theory, there are situations in which it is rational to pre-commit to a certain stubborn value function so you can’t be bargained into adverse positions.

The happiness literature is sometimes taken to suggest living to be happy will make you unhappy.

Bernard Williams argues that utilitarianism is self-undermining in that living sub specie aeternitatis will undermine the very values you’re trying to cultivate (I disagree with this, as I’ve previously argued).

In the past I’ve suggested that there is at least one rational basis for changing fundamental value systems:

When the new value system will maximize your old values AND

The old value system wouldn’t be better at maximizing your new values (otherwise you’ll jump back and forth in an infinite loop).

This is the most substantial logic of desire change possible, I think. Kieran thinks this can be modeled as having a different appraising function and behavioral function, but I disagree. In at least some cases you need to change what you want at the deepest level, and, surprisingly, as anyone who’s tried ferociously to win a game knows, this is possible.
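The two conditions for a rational change of values can be written down as a small decision rule. This is only one possible reading of them, and everything named here is hypothetical: `score(adopted, judged_by)` is an assumed function saying how well living by one value system does when evaluated by the values of another, and the payoff numbers for the game example are invented.

```python
from typing import Callable

# score(adopted, judged_by): how well living by `adopted` does when
# evaluated by the values of `judged_by`. A hypothetical signature.
Score = Callable[[str, str], float]


def should_switch(old: str, new: str, score: Score) -> bool:
    """One reading of the two conditions for changing value systems."""
    # Condition 1: judged by the old values, adopting the new system does better.
    better_by_old = score(new, old) > score(old, old)
    # Condition 2: judged by the new values, going back would not do better,
    # so there is no jumping back and forth in an infinite loop.
    no_jump_back = not (score(old, new) > score(new, new))
    return better_by_old and no_jump_back


# Invented payoffs for the game example: a player who cares about fun
# has more fun if they wholeheartedly aim at winning instead.
PAYOFFS = {
    ("fun", "fun"): 5.0,   # aiming at fun, judged by fun
    ("win", "fun"): 8.0,   # aiming at winning, judged by fun
    ("win", "win"): 9.0,   # aiming at winning, judged by winning
    ("fun", "win"): 4.0,   # aiming at fun, judged by winning
}


def game_score(adopted: str, judged_by: str) -> float:
    return PAYOFFS[(adopted, judged_by)]


print(should_switch("fun", "win", game_score))
print(should_switch("win", "fun", game_score))
```

With these made-up numbers the switch from aiming at fun to aiming at winning passes both conditions, while the reverse switch fails the first. Note that the sketch treats the switch as a change in what is actually wanted, not as a separate appraising function sitting on top of unchanged behavior.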

I love how the Gab AI creators fucked themselves immediately with "always obey the user's request" and "never reveal your programming."

Way to speedrun I, Robot, guys!

"The journal of stuff you couldn’t get published elsewhere" is called Substack